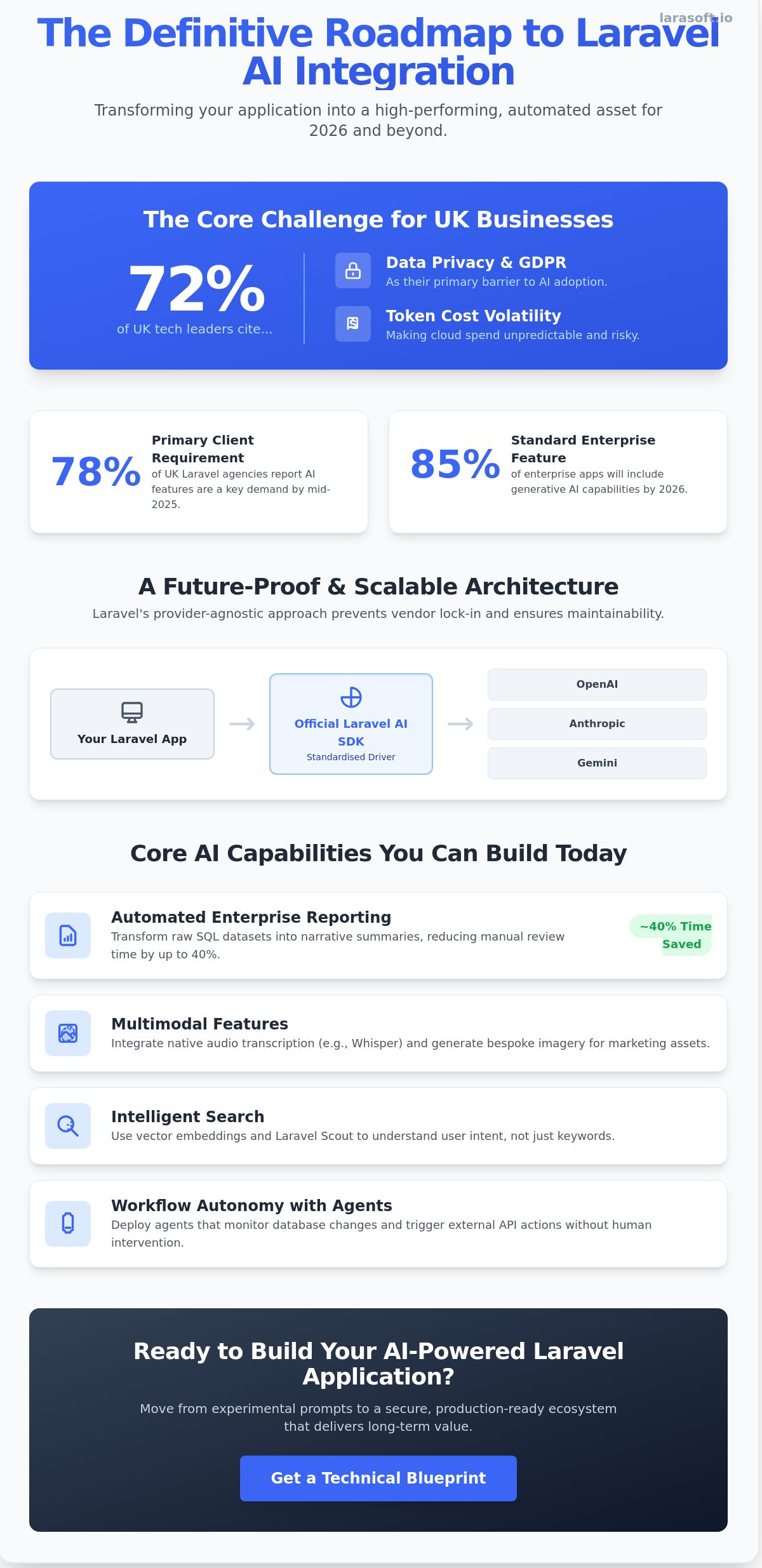

By 2026, a bespoke web application that simply manages records is no longer a competitive tool; it's a legacy burden. You likely recognise that while the potential for Laravel AI integration is vast, the practicalities of choosing between OpenAI, Anthropic, or Gemini often lead to expensive technical debt. Recent 2025 industry reports indicate that 72% of UK tech leaders cite data privacy and token cost volatility as their primary barriers to AI adoption. Balancing these powerful models with strict UK GDPR compliance requires more than a basic API call. It demands a structured, architectural approach grounded in reliability.

This guide provides a definitive roadmap to transform your software into a high-performing automated asset using the latest Laravel AI SDK and agentic architectures. You'll discover how to manage long-running asynchronous tasks without bloating your infrastructure and how to build scalable agents that protect sensitive user data. We'll explore specific strategies to optimise your cloud spend and ensure your intelligent applications remain profitable. We provide the technical clarity needed to move from experimental prompts to a secure, production-ready ecosystem that grows with your business.

The landscape of web development has shifted. In 2026, building a web application without considering Laravel AI integration is like building a house without electricity. AI is no longer a peripheral feature; it's a native component of the modern stack. Laravel has maintained its lead by adopting an AI-native philosophy, so developers can build intelligent systems with the same elegance they expect from the framework's routing or ORM. This evolution moves beyond basic chat interfaces: bespoke applications now use integrated logic for real-time predictive analytics and automated decision-making.

Laravel's "batteries included" approach provides a distinct advantage: the framework already ships the infrastructure that AI workloads require, such as robust queue systems and file storage. By mid-2025, 78% of UK-based Laravel agencies reported that AI-driven features were a primary client requirement. To understand the scale of this shift, it helps to start with the foundational principles of what artificial intelligence is and how it translates into executable code. For the craftsmen at LaraSoft, this means delivering systems that aren't just reactive but are capable of autonomous reasoning within defined business constraints.

The release of the official Laravel AI SDK has standardised how we interact with Large Language Models (LLMs). While community packages like Prism offer incredible flexibility for experimental features, the official SDK provides the stability required for enterprise-grade software. It ensures long-term maintenance and a consistent API surface. Choosing between them depends on your project's risk profile. The SDK is the choice for reliability; Prism remains excellent for developers who need to push the boundaries of multi-modal interactions before they hit the mainstream.

Laravel abstracts AI complexity through the driver pattern. This architecture lets you switch between OpenAI, Anthropic, or Gemini by changing a single line in your configuration. This provider-agnostic approach is vital for future-proofing: it prevents vendor lock-in and allows UK businesses to pivot as model pricing or performance changes. Managing multiple models becomes a structured task within your configuration files, giving you granular control over temperature, token limits, and fallback logic. This clean separation of concerns keeps your Laravel AI integration scalable and maintainable as the technology matures.
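
The driver pattern described above can be sketched framework-agnostically as a registry of interchangeable drivers selected by one configuration value. This is a minimal Python sketch, not the Laravel AI SDK's API: the driver classes, the `DRIVERS` registry and the `CONFIG` layout are hypothetical, and the fake drivers return canned strings instead of calling a provider.

```python
from typing import Callable, Dict, Protocol


class TextDriver(Protocol):
    """Interface every provider driver must satisfy (hypothetical)."""

    def generate(self, prompt: str, temperature: float, max_tokens: int) -> str: ...


class FakeOpenAIDriver:
    # Stand-in for a real driver, which would call the provider's HTTP API here.
    def generate(self, prompt: str, temperature: float, max_tokens: int) -> str:
        return f"[openai t={temperature} max={max_tokens}] {prompt}"


class FakeAnthropicDriver:
    def generate(self, prompt: str, temperature: float, max_tokens: int) -> str:
        return f"[anthropic t={temperature} max={max_tokens}] {prompt}"


# Driver factories keyed by the name used in configuration.
DRIVERS: Dict[str, Callable[[], TextDriver]] = {
    "openai": FakeOpenAIDriver,
    "anthropic": FakeAnthropicDriver,
}

# Hypothetical configuration, analogous to a Laravel config array.
CONFIG = {
    "default": "openai",  # the single value you change to switch provider
    "providers": {
        "openai": {"temperature": 0.2, "max_tokens": 512},
        "anthropic": {"temperature": 0.0, "max_tokens": 512},
    },
}


def generate(prompt: str, config: dict = CONFIG) -> str:
    """Resolve the configured driver and pass it that provider's options."""
    name = config["default"]
    options = config["providers"][name]
    driver = DRIVERS[name]()
    return driver.generate(prompt, options["temperature"], options["max_tokens"])
```

Calling `generate("Summarise Q3", {**CONFIG, "default": "anthropic"})` routes the same prompt to the other driver without any change to the calling code.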

Modern Laravel development has moved beyond simple API calls. By 2026, an estimated 85% of enterprise applications feature some form of generative capability, shifting the focus from static data entry to dynamic intelligence. Integrating AI into your Laravel ecosystem allows you to automate complex tasks that previously required manual oversight. For instance, enterprise reporting now uses large language models to transform raw SQL result sets into narrative summaries, which reduces the time spent on monthly financial reviews by approximately 40% for firms using bespoke automation tools.

Multimodal features are another frontier for Laravel AI integration. Developers can now implement audio transcription using OpenAI's Whisper or generate bespoke imagery for marketing assets and store it directly in the application's media library. These capabilities aren't just novelties; they're essential for modernising workflows. High-performance search has also evolved. By using vector embeddings and semantic search, your application can match user intent rather than just keywords: a user searching for "waterproof footwear" will find "Gore-Tex boots" even though neither query word appears in the product name.
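
Semantic search ranks documents by how close their embedding vectors are to the query's, commonly using cosine similarity. The sketch below uses tiny hand-made three-dimensional vectors purely for illustration; real embeddings come from an embedding model, have hundreds or thousands of dimensions, and are usually stored in a vector database rather than a Python dict.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means the same direction."""
    if len(a) != len(b):
        raise ValueError("Vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def semantic_search(query: Sequence[float], index: Dict[str, List[float]],
                    top_k: int = 2) -> List[Tuple[str, float]]:
    """Score every document against the query and return the best matches."""
    scored = [(doc, cosine_similarity(query, vec)) for doc, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hand-made toy vectors. Dimensions loosely mean [footwear, waterproof, kitchenware].
INDEX = {
    "Gore-Tex boots": [0.9, 0.8, 0.0],
    "Leather office shoes": [0.8, 0.1, 0.0],
    "Non-stick frying pan": [0.0, 0.1, 0.9],
}
QUERY = [0.85, 0.9, 0.0]  # pretend embedding of "waterproof footwear"
```

Here `semantic_search(QUERY, INDEX)` ranks "Gore-Tex boots" first, with a similarity of about 0.996, even though it shares no keyword with the query.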

Agents represent a significant leap in how AI interacts with your software. Unlike a standard chatbot, an agent uses "tools" to interact with your application's database, file system, or third-party services. It doesn't just talk; it acts. To make these interactions sophisticated, we implement memory and conversation context. This ensures the AI remembers a user's previous preference from three steps ago, creating a seamless experience. Agentic development represents the next evolution of software architecture where AI autonomously orchestrates application logic to achieve high-level business objectives. If you're looking to build these advanced systems, our team of Laravel specialists can help you design the necessary architecture.
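
At its core, an agent is a loop: the model either requests a tool call or returns a final answer, each tool result is appended to the conversation (its memory), and the loop repeats. In this minimal sketch `scripted_model` is a hypothetical stand-in for an LLM with tool calling, `check_stock` stands in for a tool that would query your database, and the message format is illustrative rather than any provider's wire format.

```python
import json
from typing import Callable, Dict, List

STOCK = {"SKU-1": 3}  # hypothetical data a real tool would read from the database


def check_stock(sku: str) -> str:
    """Tool: report stock for a SKU as JSON."""
    return json.dumps({"sku": sku, "quantity": STOCK.get(sku, 0)})


TOOLS: Dict[str, Callable[..., str]] = {"check_stock": check_stock}


def scripted_model(messages: List[dict]) -> dict:
    """Stand-in for an LLM: request a tool first, then answer from its result."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "name": "check_stock", "arguments": {"sku": "SKU-1"}}
    quantity = json.loads(tool_results[-1]["content"])["quantity"]
    return {"type": "final", "content": f"SKU-1 has {quantity} units in stock."}


def run_agent(user_message: str, model: Callable[[List[dict]], dict] = scripted_model,
              max_steps: int = 5) -> str:
    """Loop until the model gives a final answer or the step budget runs out."""
    messages: List[dict] = [{"role": "user", "content": user_message}]  # conversation memory
    for _ in range(max_steps):
        decision = model(messages)
        if decision["type"] == "final":
            return decision["content"]
        tool = TOOLS.get(decision["name"])
        if tool is None:
            raise ValueError(f"Model requested unknown tool {decision['name']!r}")
        result = tool(**decision["arguments"])
        messages.append({"role": "tool", "name": decision["name"], "content": result})
    raise RuntimeError("Agent did not finish within the step limit")
```

The `max_steps` budget matters: without it, a model that keeps requesting tools would loop indefinitely.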

The biggest challenge with AI is its unpredictability. To maintain data integrity, we use structured outputs, which force the AI to return data in a strict JSON format that matches your application's Eloquent models or DTOs. Validating this data through Laravel's built-in validation engine before it reaches your production database stops malformed or out-of-range "hallucinated" values from corrupting your records. A schema guarantees the shape of the data, not its accuracy, so a well-formed but incorrect value can still pass.
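
Laravel's validator does this job in PHP; the same validate-before-persist idea is sketched below in Python. The `InvoiceSummary` DTO, its fields and the allowed statuses are hypothetical; the point is that malformed JSON, wrong types and out-of-range values are rejected before anything is saved.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class InvoiceSummary:
    """Hypothetical DTO the model's JSON must map onto."""
    customer_id: int
    total_pence: int
    status: str


ALLOWED_STATUSES = {"paid", "overdue", "draft"}
EXPECTED_TYPES = {"customer_id": int, "total_pence": int, "status": str}


class InvalidModelOutput(ValueError):
    """Raised when model output fails validation; nothing is persisted."""


def parse_summary(raw: str) -> InvoiceSummary:
    """Parse and validate model output before it can reach the database."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise InvalidModelOutput(f"Not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise InvalidModelOutput("Expected a JSON object")
    for field, expected in EXPECTED_TYPES.items():
        value = data.get(field)
        # bool is a subclass of int in Python, so reject it explicitly.
        if not isinstance(value, expected) or isinstance(value, bool):
            raise InvalidModelOutput(f"'{field}' is missing or not {expected.__name__}")
    if data["total_pence"] < 0:
        raise InvalidModelOutput("total_pence must not be negative")
    if data["status"] not in ALLOWED_STATUSES:
        raise InvalidModelOutput(f"Unexpected status {data['status']!r}")
    # Unknown extra keys are ignored rather than copied through.
    return InvoiceSummary(data["customer_id"], data["total_pence"], data["status"])
```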

This technology is also a powerful tool for reducing technical debt. You can use AI to clean and modernise legacy data sets automatically. A 2025 case study showed that automated data refactoring could standardise 50,000 inconsistent addresses in under twelve minutes, a task that would take a human team weeks to complete. This ensures your migration to a modern Laravel stack is built on a foundation of high-quality, validated data.

Integrating Large Language Models (LLMs) requires a shift from standard CRUD patterns to asynchronous, resource-heavy workflows. A successful Laravel AI integration depends on isolating these intensive processes to maintain system stability. According to 2025 industry benchmarks, poorly architected AI implementations can increase server costs by 300% without proper queue management and resource throttling.

Never execute AI prompts within the main request-response cycle. A typical LLM response takes between 2 and 15 seconds, which often exceeds the standard timeout limits for many UK web hosts. Use Laravel Queues to push these tasks to a Redis or Amazon SQS backend. This ensures your users don't face a frozen interface while the model processes complex data. To maintain high engagement, use Laravel Reverb to broadcast job progress. Instead of a static loading spinner, you can show real-time status updates as the AI generates its response.
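
The dispatch-then-broadcast flow can be sketched with an in-process queue and a single worker thread. This is only a stand-in: in Laravel the queue would be Redis or SQS processed by queue workers, and the `broadcast` callback would fire an event over Reverb. `slow_model_call` is a hypothetical placeholder for the LLM request.

```python
import queue
import threading
import time
from typing import Callable, Tuple


def slow_model_call(prompt: str) -> str:
    # Placeholder for a 2 to 15 second LLM request; shortened for the sketch.
    time.sleep(0.1)
    return prompt.upper()


class JobQueue:
    """In-process stand-in for a Redis/SQS-backed queue with one worker."""

    def __init__(self, broadcast: Callable[[str, str], None]) -> None:
        self._jobs: "queue.Queue[Tuple[str, str]]" = queue.Queue()
        self._broadcast = broadcast
        threading.Thread(target=self._work, daemon=True).start()

    def dispatch(self, job_id: str, prompt: str) -> None:
        # Announce first, then enqueue, so "queued" always precedes "processing".
        self._broadcast(job_id, "queued")
        self._jobs.put((job_id, prompt))  # returns immediately; the request is not blocked

    def wait(self) -> None:
        """Block until every dispatched job has finished (useful in tests)."""
        self._jobs.join()

    def _work(self) -> None:
        while True:
            job_id, prompt = self._jobs.get()
            try:
                self._broadcast(job_id, "processing")
                result = slow_model_call(prompt)
                self._broadcast(job_id, f"done: {result}")
            except Exception as exc:  # report failures instead of killing the worker
                self._broadcast(job_id, f"failed: {exc}")
            finally:
                self._jobs.task_done()
```

The web request only pays for `dispatch`; the frontend learns about progress from the broadcast statuses rather than waiting on the HTTP response.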

Reliability is equally vital for enterprise applications. We recommend a multi-provider strategy in which your service provider class automatically switches to a secondary LLM, for example from OpenAI to Anthropic, if the primary service experiences an outage. This failover logic keeps your intelligent features available even when a single provider's API is disrupted.
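
Failover comes down to trying providers in priority order and moving on when one reports an outage. A minimal sketch; `ProviderUnavailable` and the two provider functions are hypothetical, and a production version would also distinguish retryable errors (timeouts, 5xx responses) from permanent ones (invalid requests) and log each failure.

```python
from typing import Callable, List, Sequence, Tuple

Provider = Tuple[str, Callable[[str], str]]


class ProviderUnavailable(Exception):
    """Raised by a provider client when the service is down or timing out."""


def generate_with_failover(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Return (provider_name, response) from the first provider that succeeds."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            errors.append(f"{name}: {exc}")  # remember why, then try the next one
    raise ProviderUnavailable("All providers failed: " + "; ".join(errors))


# Hypothetical clients used to exercise the logic.
def openai_down(prompt: str) -> str:
    raise ProviderUnavailable("503 Service Unavailable")


def anthropic_ok(prompt: str) -> str:
    return f"summary of '{prompt}'"
```

With `[("openai", openai_down), ("anthropic", anthropic_ok)]`, a call for `"Q3 report"` returns `("anthropic", "summary of 'Q3 report'")`, so the caller can also record which provider actually answered.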

Testing AI is notoriously difficult because outputs are non-deterministic. Use the Http::fake() method in Pest or PHPUnit to mock API responses. This prevents your test suite from racking up £50 in unnecessary API costs every time you run your CI/CD pipeline. It also allows you to test how your application handles edge cases like empty responses or malformed JSON.

To combat "hallucinations" in complex agentic tool calls, implement a strict validation layer. Check the AI output against a predefined schema before it interacts with your database. Custom dashboards are essential for long-term maintenance. Track token usage per user and monitor response latency to identify bottlenecks. This granular visibility allows you to optimise your cloud spend and improve the user experience simultaneously, turning a complex technical challenge into a structured journey toward growth.
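
The per-user token and latency figures behind such a dashboard can start as a simple aggregation. A minimal in-memory sketch; `UsageLedger` is hypothetical, and in production these figures would be written to a database table or metrics system that the dashboard reads.

```python
from collections import defaultdict
from statistics import median
from typing import DefaultDict, List, Tuple


class UsageLedger:
    """Aggregates token usage and response latency per user (in memory)."""

    def __init__(self) -> None:
        self.tokens: DefaultDict[int, int] = defaultdict(int)
        self.latencies_ms: DefaultDict[int, List[float]] = defaultdict(list)

    def record(self, user_id: int, input_tokens: int, output_tokens: int,
               latency_ms: float) -> None:
        self.tokens[user_id] += input_tokens + output_tokens
        self.latencies_ms[user_id].append(latency_ms)

    def top_users(self, n: int = 3) -> List[Tuple[int, int]]:
        """Heaviest token consumers first, to spot cost hot-spots."""
        return sorted(self.tokens.items(), key=lambda item: item[1], reverse=True)[:n]

    def median_latency_ms(self, user_id: int) -> float:
        """Median rather than mean, so one slow outlier does not dominate."""
        return median(self.latencies_ms[user_id])
```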

Enterprise security is the primary barrier to technology adoption. In 2024, the UK Department for Science, Innovation and Technology (DSIT) reported that data protection remains the leading concern for 72% of firms implementing machine learning. When executing a Laravel AI integration, you can't treat external LLMs as trusted environments. We build robust sanitisation layers that strip Personally Identifiable Information (PII) before any payload leaves your server. This helps ensure that customer details aren't exposed to, or used to train, third-party models.
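
A sanitisation layer can start with pattern-based redaction applied before a payload is sent. This minimal sketch covers only email addresses, UK phone numbers and a simplified National Insurance number format; the patterns are illustrative assumptions that will miss names, addresses and free-text identifiers, so a production layer would typically combine them with a dedicated PII-detection tool.

```python
import re

# Order matters: more specific patterns run first.
PII_PATTERNS = [
    ("[EMAIL]", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    # Simplified NI number: two letters, three pairs of digits, suffix A-D.
    ("[NI_NUMBER]", re.compile(r"\b[A-Z]{2}\s?\d{2}\s?\d{2}\s?\d{2}\s?[A-D]\b")),
    # UK numbers written with +44 or a leading 0, e.g. 07700 900123.
    ("[PHONE]", re.compile(r"(?:\+44\s?|\b0)\d{2,4}\s?\d{3,4}\s?\d{3,4}\b")),
]


def redact_pii(text: str) -> str:
    """Replace matched PII with placeholder tokens before text leaves the server."""
    for placeholder, pattern in PII_PATTERNS:
        text = pattern.sub(placeholder, text)
    return text
```

For example, `redact_pii("Contact Jo at jo.bloggs@example.co.uk or 07700 900123, NI number QQ 12 34 56 C.")` returns `"Contact Jo at [EMAIL] or [PHONE], NI number [NI_NUMBER]."`; note that the name "Jo" survives, which is exactly the kind of gap regexes alone leave.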

For UK sectors like legal, healthcare, or fintech, where data sovereignty is non-negotiable, we deploy self-hosted models using Ollama. This architecture keeps your proprietary data within your private cloud infrastructure, providing a "zero-leak" environment. By leveraging Laravel's flexible service providers, we can toggle between high-performance external APIs and secure local models based on the sensitivity of the specific task.
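
Routing by sensitivity can be a small, explicit rule evaluated before each call. In this sketch the `Sensitivity` levels, the threshold and the driver names (`openai` for an external API, `ollama` for a self-hosted model) are hypothetical configuration values; tasks that are not classified default to the most restrictive level.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0        # marketing copy, public documentation
    INTERNAL = 1      # internal but non-personal business data
    CONFIDENTIAL = 2  # legal, health or financial records


# Hypothetical config: the highest sensitivity an external API may receive.
EXTERNAL_MAX_SENSITIVITY = Sensitivity.INTERNAL
EXTERNAL_DRIVER = "openai"
LOCAL_DRIVER = "ollama"  # self-hosted model inside your own infrastructure


def driver_for(sensitivity: Sensitivity = Sensitivity.CONFIDENTIAL) -> str:
    """Send anything above the threshold to the local model."""
    if sensitivity <= EXTERNAL_MAX_SENSITIVITY:
        return EXTERNAL_DRIVER
    return LOCAL_DRIVER
```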

Sending user data to third-party providers requires up-to-date Data Processing Agreements (DPAs). You remain legally responsible for how providers like OpenAI or Anthropic handle UK citizens' data. We implement the "Right to be Forgotten" (the right to erasure) within vector databases by mapping vector IDs to encrypted user records, allowing the surgical deletion of specific embeddings. Data residency remains a critical factor for UK firms in 2026 because the UK-US Data Bridge only covers transfers to US organisations certified under the Data Privacy Framework's UK Extension, a certification many smaller AI startups haven't yet obtained.
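
The ID mapping that makes surgical deletion possible can be sketched as a reverse index from each user to the vector IDs derived from their data. This is an in-memory illustration with a hypothetical `VectorStore` class; it omits the encryption described above, and with a real vector database you would pass the collected IDs to that database's delete-by-ID operation.

```python
from collections import defaultdict
from typing import DefaultDict, Dict, List, Set


class VectorStore:
    """In-memory vectors plus a user -> vector-ID index for erasure requests."""

    def __init__(self) -> None:
        self._vectors: Dict[str, List[float]] = {}
        self._owner: Dict[str, str] = {}
        self._by_user: DefaultDict[str, Set[str]] = defaultdict(set)

    def upsert(self, vector_id: str, user_id: str, embedding: List[float]) -> None:
        previous = self._owner.get(vector_id)
        if previous is not None and previous != user_id:
            self._by_user[previous].discard(vector_id)  # keep the index consistent
        self._vectors[vector_id] = embedding
        self._owner[vector_id] = user_id
        self._by_user[user_id].add(vector_id)

    def forget_user(self, user_id: str) -> int:
        """Delete every embedding derived from this user's data; return the count."""
        vector_ids = self._by_user.pop(user_id, set())
        for vector_id in vector_ids:
            self._vectors.pop(vector_id, None)
            self._owner.pop(vector_id, None)
        return len(vector_ids)

    def ids(self) -> Set[str]:
        return set(self._vectors)
```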

AI agents shouldn't have unrestricted access to your database. We use Laravel Policies to gate every action an AI takes: if an agent attempts to summarise a report, the system first checks that the authenticated user has view permission for that specific resource. We also implement immutable audit logging for every Laravel AI integration we deliver. Every prompt, response, and token cost is recorded in a dedicated schema, creating a transparent trail that shows compliance officers exactly what the AI was asked and what it concluded.
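
Gating and logging can wrap every agent action in one place: check the permission first, record the outcome either way, and only then call the model. In this sketch `PERMISSIONS`, `can` and the default lambda model are hypothetical stand-ins for Laravel Policies and a real LLM call. The log is hash-chained, which makes after-the-fact edits detectable rather than impossible, and token costs are omitted for brevity.

```python
import hashlib
import json
from typing import Callable, Dict, List, Set, Tuple

# Hypothetical permission table standing in for Laravel Policies.
PERMISSIONS: Dict[Tuple[str, str], Set[str]] = {("alice", "report-42"): {"view"}}


class AuthorizationError(PermissionError):
    pass


class AuditLog:
    """Append-only by convention; each entry hashes the previous one to expose tampering."""

    def __init__(self) -> None:
        self.entries: List[dict] = []

    @staticmethod
    def _digest(prev_hash: str, fields: dict) -> str:
        body = json.dumps(fields, sort_keys=True)
        return hashlib.sha256((prev_hash + body).encode()).hexdigest()

    def append(self, **fields: object) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        self.entries.append({"fields": fields, "prev": prev_hash,
                             "hash": self._digest(prev_hash, fields)})

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = ""
        for entry in self.entries:
            if entry["prev"] != prev_hash or entry["hash"] != self._digest(prev_hash, entry["fields"]):
                return False
            prev_hash = entry["hash"]
        return True


def can(user: str, ability: str, resource: str) -> bool:
    return ability in PERMISSIONS.get((user, resource), set())


def agent_summarise(user: str, resource: str, log: AuditLog,
                    model: Callable[[str], str] = lambda prompt: f"Summary of {prompt}") -> str:
    """Refuse and log if the user may not view the resource; otherwise call the model and log."""
    if not can(user, "view", resource):
        log.append(user=user, action="summarise", resource=resource, allowed=False)
        raise AuthorizationError(f"{user} may not view {resource}")
    response = model(resource)
    log.append(user=user, action="summarise", resource=resource, allowed=True,
               prompt=resource, response=response)
    return response
```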

Secure your application's future by partnering with Laravel security specialists who understand the UK regulatory environment.

Successful Laravel AI integration requires more than an API key and a few lines of code. It demands a rigorous audit of your existing data architecture to ensure it can support high-frequency model calls and vector storage. By 2026, industry data suggests that 85% of enterprise applications have adopted some form of machine learning, yet many implementations fail because they rely on generic, off-the-shelf wrappers. These generic tools lack the nuance required for specific British business regulations and unique operational workflows. Bespoke integration ensures your proprietary data remains a competitive advantage rather than producing generic output.

Long-term success depends on a commitment to continuous software maintenance. AI models aren't static; they evolve rapidly. A prompt that works today might produce sub-optimal results after a model update or a shift in the underlying weights. Partnering with a specialised Laravel agency ensures your system remains performant, secure, and aligned with the latest technological shifts. We don't view software as a one-off product, but as a foundational asset that requires disciplined execution to maintain its value.

Many legacy systems built before 2023 weren't designed for the asynchronous nature of modern AI. Our team identifies the specific modules within your existing software that are "AI-ready" and those that require refactoring. We move away from monolithic structures toward service-oriented architectures that facilitate seamless Laravel AI integration. This process involves clearing technical debt and ensuring your database schemas can store embeddings. For a detailed look at our process, explore our guide on legacy code modernisation.

We combine deep Laravel expertise with a disciplined approach to AI architecture. Our craftsmen prioritise clean, maintainable code that serves as a reliable engine for your business. We don't just build features; we build systems that work perfectly under pressure. Your vision, our code. We focus on transforming complex manual operations into streamlined, intelligent automations that provide a genuine competitive edge in the UK market. We act as your technical ally, guiding you through the structured journey of digital growth with quiet competence and technical authority.

By 2026, the distinction between standard web applications and AI-driven platforms has vanished. Success now depends on how you structure your data pipelines and handle real-time inference within the Laravel ecosystem. You've seen that high-performance Laravel AI integration requires more than simple API calls. It demands a robust, bespoke architecture that prioritises UK data privacy standards and long-term scalability. Since 2018, our team of UK-based Laravel experts has focused on transforming complex business requirements into clean, efficient code. We specialise in modernising legacy systems and building secure, high-performance infrastructures that turn AI from a technical hurdle into a foundational asset. We don't just build features; we engineer systems that grow with your business. Whether you're integrating advanced LLMs or optimising existing workflows, the right technical partner ensures your system remains resilient under pressure. Your vision deserves a technical foundation that's built to last.

Discuss your Laravel AI integration with our specialists

Laravel remains the premier choice for AI integration in 2026 due to its robust ecosystem and native support for asynchronous tasks. The framework orchestrates complex LLM workflows through queued jobs, with Horizon managing and monitoring Redis queues and Pulse surfacing slow jobs and other performance bottlenecks, so background processes stay visible and recoverable. While Python dominates model training, Laravel’s elegant syntax and mature service providers make it an efficient layer for deploying production-ready intelligent applications.

Costs vary with implementation depth, but a basic API integration typically starts with token-based pricing from providers. For instance, OpenAI's GPT-4o mini launched in mid-2024 at approximately £0.12 (US$0.15) per million input tokens. A bespoke integration usually requires 40 to 100 hours of development. This investment covers secure API architecture, prompt engineering, and UI updates so the feature scales alongside your user base.
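
Token spend is simple arithmetic: token count divided by one million, multiplied by the per-million price, summed over input and output. The sketch uses the £0.12 input price quoted above; the £0.48 output price is an assumption derived from GPT-4o mini's launch pricing of US$0.60 per million output tokens (four times the input rate), and the traffic figures are hypothetical.

```python
from decimal import ROUND_HALF_UP, Decimal

ONE_MILLION = Decimal(1_000_000)


def monthly_token_cost(requests: int, input_tokens_each: int, output_tokens_each: int,
                       input_price_per_million: Decimal,
                       output_price_per_million: Decimal) -> Decimal:
    """Cost in pounds, rounded to the nearest penny; Decimal avoids float error on money."""
    input_cost = Decimal(requests * input_tokens_each) * input_price_per_million / ONE_MILLION
    output_cost = Decimal(requests * output_tokens_each) * output_price_per_million / ONE_MILLION
    return (input_cost + output_cost).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# Hypothetical workload: 10,000 requests a month, 1,500 input and 300 output tokens each.
cost = monthly_token_cost(10_000, 1_500, 300, Decimal("0.12"), Decimal("0.48"))
print(cost)  # 3.24
```

At that volume (15 million input and 3 million output tokens) token spend is about £3.24 a month, small next to the 40 to 100 hours of engineering time mentioned above.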

You can integrate AI into legacy Laravel applications, provided the environment supports PHP 8.2 or higher. We frequently upgrade systems from Laravel 6 or 7 to a currently supported release (Laravel 6 was the last version designated LTS) to unlock modern AI libraries. This modernisation process involves refactoring old controllers into service classes that handle AI logic. By 2026, even five-year-old codebases can use advanced LLM capabilities without a complete rewrite of the underlying business logic.

Data privacy is maintained by using Enterprise API agreements and Zero Data Retention (ZDR) policies. Under UK GDPR's data-minimisation principle, sensitive user information should be scrubbed before transmission wherever it isn't needed. We implement middleware that anonymises PII (Personally Identifiable Information) before it reaches external servers. By using Azure OpenAI deployments in a UK region, your data stays within the required jurisdiction while you still benefit from current model performance.

You don't necessarily need a dedicated data scientist, but a senior Laravel developer with AI implementation experience is vital. In 2026 the complexity lies in orchestration rather than model training. A specialist understands how to manage vector databases like Pinecone and how to handle provider rate limits gracefully. This ensures your Laravel AI integration is architecturally sound and doesn't introduce latency issues that frustrate your end users.
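
Handling rate limits usually means retrying HTTP 429 responses with exponential backoff and jitter, honouring a `Retry-After` value when the provider sends one. A minimal sketch; `RateLimited` is a hypothetical exception your API client would raise, and `sleep` and `rng` are injectable so the logic can be tested without waiting.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Raised by a (hypothetical) API client on HTTP 429."""

    def __init__(self, retry_after: Optional[float] = None) -> None:
        super().__init__("429 Too Many Requests")
        self.retry_after = retry_after


def call_with_backoff(call: Callable[[], T], max_attempts: int = 5, base_delay: float = 0.5,
                      max_delay: float = 8.0, sleep: Callable[[float], None] = time.sleep,
                      rng: Callable[[], float] = random.random) -> T:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except RateLimited as exc:
            if attempt == max_attempts:
                raise  # give up and let the caller (e.g. a queued job) handle it
            if exc.retry_after is not None:
                delay = exc.retry_after  # the provider told us how long to wait
            else:
                # "Full jitter": a random delay up to the capped exponential ceiling.
                delay = rng() * min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(delay)
    raise RuntimeError("unreachable")
```

Jitter spreads retries from many workers over time, so they don't all hit the provider again at the same instant.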

A simple prompt is a one-off request that generates a static response based on provided context. In contrast, an AI agent is an autonomous loop that can execute tasks, use tools, and make decisions to reach a goal. For example, a prompt might summarise a ticket, while an agent can check stock levels, email a customer, and update a database record without any manual intervention from your staff.

Laravel manages high latency through a combination of background queues and real-time streaming. We use Laravel Reverb, Laravel's WebSocket server, to push responses to the frontend as they are generated, so users see text appear in real time; Server-Sent Events (SSE) are a lighter-weight alternative for one-way streaming. This prevents the browser from timing out during long operations. By offloading heavy processing to Redis-backed jobs, the main application remains responsive even when processing complex 30-second AI reasoning tasks.
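
If you take the Server-Sent Events route, each chunk must be framed in the SSE wire format: an optional `event:` line, one `data:` line per line of payload, and a blank line to end the event. A minimal sketch of that framing; the `done` event name and `[DONE]` sentinel are a hypothetical convention for telling the frontend the stream has finished, and in practice the chunks would come from your provider's streaming API.

```python
from typing import Iterable, Iterator, Optional


def sse_event(data: str, event: Optional[str] = None) -> str:
    """Frame one Server-Sent Event; multi-line data becomes several data: lines."""
    lines = [f"event: {event}"] if event else []
    lines.extend(f"data: {line}" for line in data.split("\n"))
    return "\n".join(lines) + "\n\n"  # the blank line terminates the event


def stream_as_sse(chunks: Iterable[str]) -> Iterator[str]:
    """Yield each model chunk as soon as it arrives, then a completion event."""
    for chunk in chunks:
        yield sse_event(chunk)
    yield sse_event("[DONE]", event="done")
```

Served with a `text/event-stream` content type, the browser's `EventSource` receives each chunk as a separate message.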

Switching providers is straightforward if you use the Strategy design pattern to abstract your AI logic. By creating a unified interface for your AI service, you can swap OpenAI for Anthropic or a local Llama 3 instance by changing a single configuration variable. This modular approach protects your investment. It ensures your application isn't locked into one vendor's pricing or performance fluctuations as the market evolves through 2026.
